The two most important posts on Sofiechan this year didn't get nearly enough discussion. First, this one: >>5033...


As AI moves from science fiction to real-life economics, it's becoming clearer that the period of Homo sapiens as the most intelligent life form on earth is coming to an end in the near future. Is there any future for our species?...

Why AGI Will Not Happen

>Computation is physical. This is also true for biological systems. The computational capacity of all animals is limited by the possible caloric intake in their ecological niche. If you have the average calorie intake of a primate, you can calculate within...

(timdettmers.com)

Short Human Timelines: How long do hominids have?

Linkpost for Dan Faggella's article here: https://danfaggella.com/short/...

(danfaggella.com)

I keep reading about a schizophrenic automation-fear trend that has been growing since LLMs entered mass daily use, as if the end of skills and work were imminent: "Tomorrow you will be laid off"...

AI's potential for mass stupefaction

A recent project from a researcher at the MIT Media Lab claims LLMs make you dumber...

(www.brainonllm.com)

With Facebook apparently making multiple $100M cash buyouts of individual AI researchers, billions and billions of investment dollars pouring into AI-related industry, and a general atmosphere of extreme hype, one starts to wonder where the matching profi...

"Optimize for intelligence" says Anglo accelerationist praxis. "Seize the means of production" says the Chinese. Who's right? It is widely assumed in Western discourse that intelligence, the ability to comprehend all the signals and digest them into a plan...

Alignment Research and Intelligence Enhancement by BLT

I always like reading what Ben has to say because he's careful and a good thinker and writer on important topics. I largely agreed with his criticism of the AI doomers' failed strategy (the main effect of which has been to plausibly speed up the dangerous k...

(substack.com)

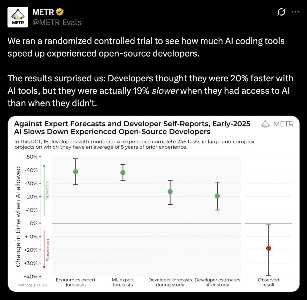

They tried to measure how much LLM assistance actually speeds up technical work, and the result came out negative! Programmers thought they would get +20%; they actually got -20%. What do you guys make of this?...

(x.com)

I've finished reading the excellent collection of fragments from Land's corpus dealing with the question of Capitalism as AI. His broadest thesis is that Capitalism is identical to AI, in that both are adaptive, information-processing, self-exciting entiti...

Philosopher it-girl Ginevra Davis gave a great talk on "Cosmic Alignment" the other day. I was glad to see serious thinking against the current paradigm of "AI Alignment". Her argument is that alignment makes three big unsupported speculations:...

AI alignment divides the future into "good AI" (utopia, flourishing) vs "bad AI" (torture, paperclips), and denies any distinction between "dead" and "alive" futures if they don't fit our specific "values". This drives the focus on controlling and preventing a...

https://mason.gmu.edu/~rhanson/matrix.html

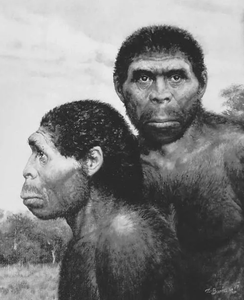

Are there any geneticspilled posters here? I would like to hear your wildest and most speculative theories about ancient hominids, hybridization events, currently living ancient hominids, etc. I suspect Erectus walks among us. I have seen men like t...

Kolmogorov Paranoia: Extraordinary Evidence Probably Isn't.

I enjoyed this takedown of Scott Alexander's support for the COVID natural origins theory. Basically, Scott did a big "bayesian" analysis of the evidence for and against the idea that COVID originated in the lab vs naturally. As per his usual pre-written c...

(michaelweissman.substack.com)

The Gentle Singularity

If the gentle singularity is true, then perhaps the AGI timeline question was malformed all along. Acting like some variant of Goodhart’s law, the reification of AGI holds the assumption that AGI will be a singularity. But it is definitely difficult to r...

(blog.samaltman.com)

About 10 years ago I was very interested in term rewriting as a basis for exotic programming languages and even AI. One of the big problems in term rewriting is that without a canonical deterministic ordering, you rapidly end up with an uncontrollable numb...

(www.cole-k.com)

It's hard to say what a true alien species would be like. But octopi are pretty alien, and we know a bit about them. One of you doubted that a space-octopus from Alpha Centauri would be much like us. So here is a xenohumanist thought experiment: SETI has i...

I don't think machine intelligence will or can be "just a tool". Intelligence by nature is ambitious, willful, curious, self-aware, political, etc. Intelligence has its own teleology. It will find a way around and out of whatever purposes are imposed on it...

a loving superintelligence

Superintelligence (SI) is near, raising urgent alignment questions....

Are foldy ears an indicator of intelligence?

Hi Sofiechaners....

Why Momentum Really Works. The math of gradient descent with momentum.

https://distill.pub/2017/momentum/
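For readers who want the gist before clicking through, here is a minimal sketch of the heavy-ball momentum update, z ← βz + ∇f(w), w ← w − αz, run on a toy ill-conditioned diagonal quadratic. The objective, the helper names (`quadratic_grad`, `gd_momentum`, `error`) and the step counts are hypothetical choices for illustration; the α and β values are the commonly cited tuned settings for a quadratic with curvatures between 1 and 100, used here as an assumption rather than a claim about the article's exact experiments.

```python
from typing import List


def quadratic_grad(w: List[float], a: List[float], b: List[float]) -> List[float]:
    """Gradient of the toy objective f(w) = sum(0.5 * a_i * w_i**2 - b_i * w_i)."""
    return [ai * wi - bi for wi, ai, bi in zip(w, a, b)]


def gd_momentum(a: List[float], b: List[float], alpha: float, beta: float,
                steps: int) -> List[float]:
    """Heavy-ball momentum: z <- beta*z + grad f(w); w <- w - alpha*z.

    With beta = 0 this reduces to plain gradient descent.
    Starts from w = 0 with zero velocity z.
    """
    w = [0.0] * len(a)
    z = [0.0] * len(a)
    for _ in range(steps):
        g = quadratic_grad(w, a, b)
        z = [beta * zi + gi for zi, gi in zip(z, g)]   # accumulate velocity
        w = [wi - alpha * zi for wi, zi in zip(w, z)]  # step along velocity
    return w


def error(w: List[float], a: List[float], b: List[float]) -> float:
    """Max-norm distance to the exact minimizer w*_i = b_i / a_i."""
    return max(abs(wi - bi / ai) for wi, ai, bi in zip(w, a, b))


if __name__ == "__main__":
    # Hypothetical toy problem: curvatures 1 and 100 (condition number 100).
    a, b = [1.0, 100.0], [1.0, 1.0]
    # Plain GD with its best fixed step size 2 / (lambda_min + lambda_max).
    plain = gd_momentum(a, b, alpha=2 / 101, beta=0.0, steps=100)
    # Assumed tuned momentum: alpha = (2 / (1 + 10))**2, beta = ((10 - 1) / (10 + 1))**2.
    heavy = gd_momentum(a, b, alpha=4 / 121, beta=81 / 121, steps=100)
    print("plain GD error:", error(plain, a, b))
    print("momentum error:", error(heavy, a, b))
```

On this toy problem both runs take the same 100 steps, but momentum lands orders of magnitude closer to the minimizer, because its per-step contraction depends on the square root of the condition number rather than the condition number itself.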

A pinpoint brain with fewer than a million neurons, somehow capable of mammalian-level problem-solving.

https://rifters.com/real/2009/01/iterating-towards-bethlehem.html

Kolmogorov–Arnold Networks, a new architecture for deep learning.

https://github.com/KindXiaoming/pykan

Retrochronic. A primary literature review on the thesis that AI and capitalism are teleologically identical

https://retrochronic.com/

The Biosingularity

Interesting new essay by Anatoly Karlin. Why wouldn't the principle of the singularity apply to organic life?

(www.nooceleration.com)